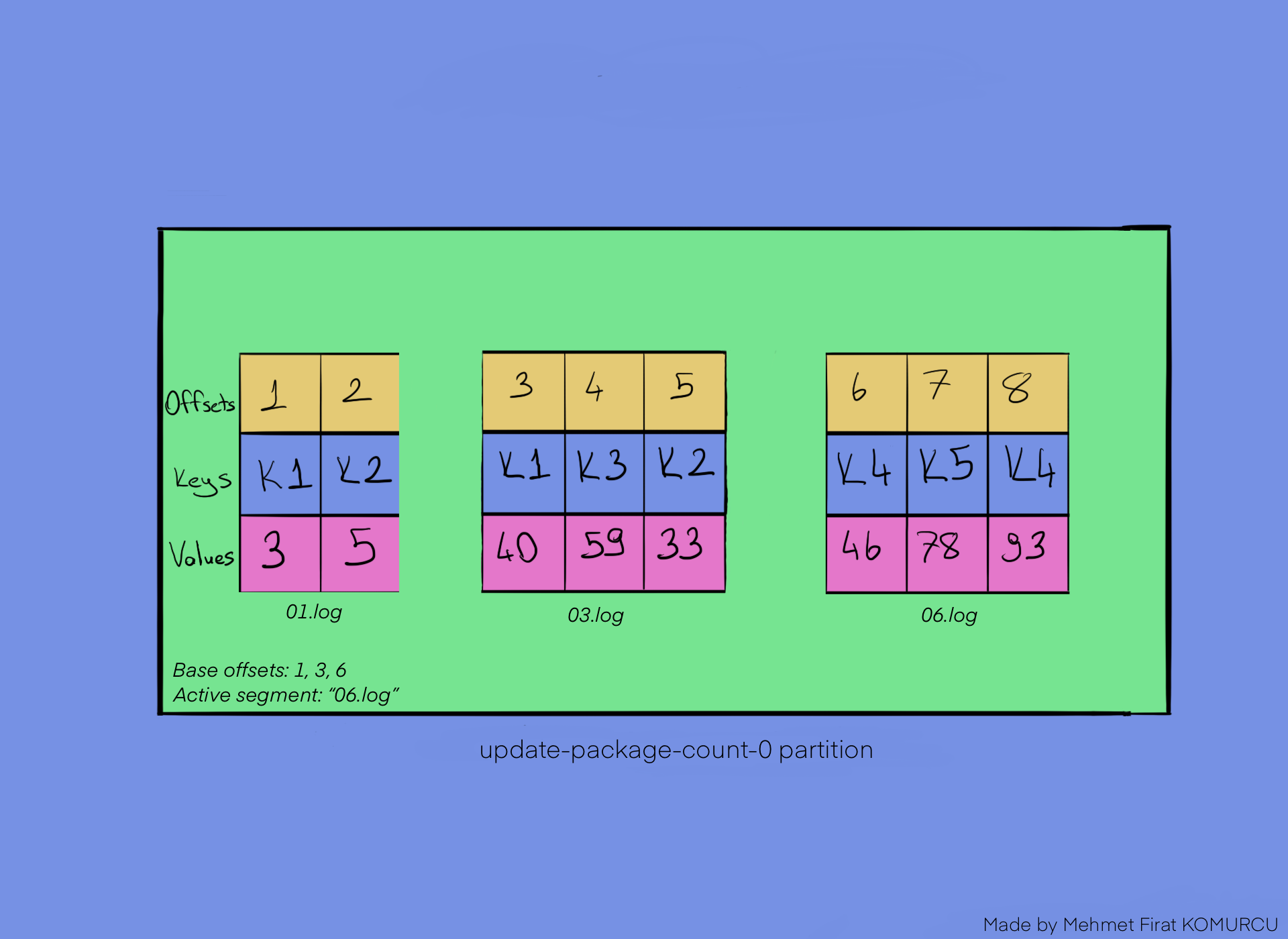

Librdkafka is a C++ language binding for Apache Kafka that many other client libraries depend on. That has changed with the release of librdkafka 1.0.0 But other language clients that depends on the C++ library librdkafka have not had support. The Apache Kafka brokers and the Java client have supported the idempotent producer feature since version 0.11 released in 2017. The idempotent producer feature addresses these issues ensuring that messages always get delivered, in the right order and without duplicates. So we can end-up with messages delivered twice and out-of-order. The message, but if we don’t resend then the message may essentially be lost.Īlso, resending messages can cause message ordering to go wrong.

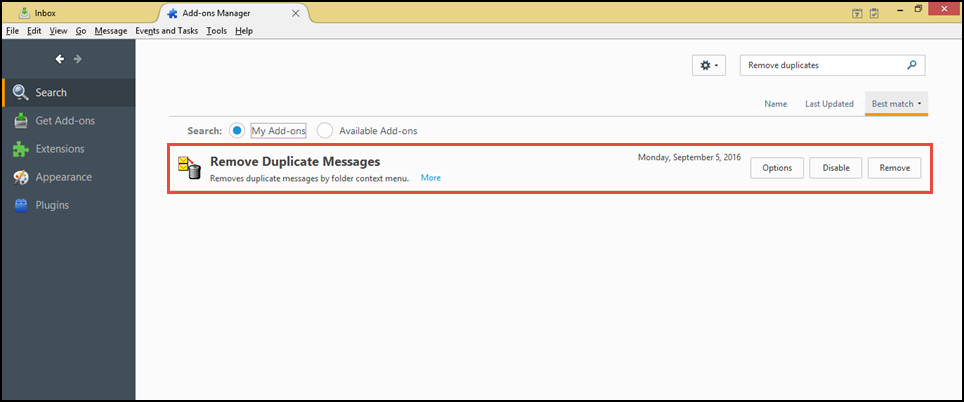

To the topic, or not, there is no way to know. The messages may have been successfully written When this happens, any messages that are pending acknowledgementsĬan either be resent or discarded. When a producer sends messages to a topic, things can go wrong, such as shortĬonnection failures. It is and why you might want to enable it. Thought it was the perfect time to cover the Idempotent Producer feature, what So with the release of librdkafka 1.0.0, we If you use Apache Kafka, and do not use Java, then you’ll If I have 5 different consumers each in a separate consumer group reading from one topic and this topic has 10 Bad records spread out over 500k messages and 2 consumers are critical and should fail if they receive bad records for further testing investigation, I manually have to reset the offset of those 2 consumers 10 time while re-processing the records because each bad record will cause them to stop.ĭeletion would be a applicable scenario for us right there and could be handled safely I guess.Ĭonfluent enterprise seems to have an option to „hard“ bind topics to avro schemas and reject Bad record, open source Kafka has nothing like this as far I know.The release of librdkafka 1.0.0 brings a new feature to those who are not on The replay scenario is exactly what I’m worrying about. If one batch only is 4 instead of 5 messages long, no problems should occur. This might cause some trouble and should not be done without consideration or course, but that’s the case for a lot of operations in kafka.ĭeleting shouldn’t at least lead to a failure because consumer’s get messages in micro batches. Thanks for your reply, I’m still wondering/curious why it’s technically not possible to delete a message by: This technique preserves the historical integrity of the original topic, while allowing you to separate the filtering/sanitation logic from the consumer. 
However, there are ways to consume a “sanitized” set of messages - for instance, by writing a consumer that re-publishes only the “valid” (or “interesting/relevant”) messages onto a “derived” topic. Timing issues: What if the command to delete a message comes right after it’s published (perhaps published by mistake): Do you send that message to consumers at all? What if the command to delete a message is received BEFORE THE MESSAGE ITSELF? One subscriber rebuilding its data store by replaying the events on the topic gets a different end-state on the replay Two subscribers to the same topic get different sets of messages To allow deleting of a message is to introduce many complex and potentially harmful edge case scenarios: No editing of messages that have been published. You said it yourself: Kafka is an append-only log.
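The "derived topic" approach suggested in the reply above can be sketched roughly as follows. This is a minimal in-memory illustration, not Kafka client code: plain Python lists stand in for the original and derived topics, and `is_valid`, `republish_valid`, and the "JSON object with an `id` field" rule are all hypothetical names and assumptions made up for the example.

```python
import json
from typing import Callable, Iterable, List


def is_valid(raw: bytes) -> bool:
    """Hypothetical validity rule: payload must be a JSON object with an 'id' field."""
    try:
        record = json.loads(raw)
    except (ValueError, UnicodeDecodeError):
        return False
    return isinstance(record, dict) and "id" in record


def republish_valid(source: Iterable[bytes],
                    publish: Callable[[bytes], None],
                    predicate: Callable[[bytes], bool] = is_valid) -> int:
    """Forward only messages that pass `predicate`; return how many were skipped."""
    skipped = 0
    for raw in source:
        if predicate(raw):
            publish(raw)
        else:
            skipped += 1
    return skipped


# In-memory stand-ins for the original topic and the derived topic (assumption).
original_topic: List[bytes] = [b'{"id": 1}', b'not json', b'{"id": 2}', b'{"name": "x"}']
derived_topic: List[bytes] = []

skipped = republish_valid(original_topic, derived_topic.append)
```

The original list is never modified, which mirrors the point made in the reply: the source topic keeps its full history, while critical consumers read only from the filtered copy. In a real deployment the loop would be a consumer on the original topic and `publish` would be a producer call to the derived topic.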
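For the duplicate and ordering problem described in the article, the general idea behind an idempotent producer can be illustrated with a toy model: the producer tags each message with a producer ID and an increasing sequence number, and the log accepts only the next expected sequence. The sketch below is a simplified, in-memory assumption of that idea; `ToyPartitionLog` and its return values are hypothetical and are not librdkafka or broker code.

```python
from typing import Dict, List


class ToyPartitionLog:
    """Hypothetical in-memory partition that deduplicates by (producer_id, sequence)."""

    def __init__(self) -> None:
        self.messages: List[str] = []
        # producer_id -> next sequence number this log expects from that producer
        self._next_seq: Dict[int, int] = {}

    def append(self, producer_id: int, seq: int, value: str) -> str:
        expected = self._next_seq.get(producer_id, 0)
        if seq < expected:
            # Already written earlier: report it, but do not append a second copy.
            return "duplicate"
        if seq > expected:
            # A gap: reject so that earlier messages are retried first.
            return "out_of_order"
        self.messages.append(value)
        self._next_seq[producer_id] = expected + 1
        return "ok"


log = ToyPartitionLog()
results = [
    log.append(producer_id=7, seq=0, value="a"),
    log.append(producer_id=7, seq=1, value="b"),
    log.append(producer_id=7, seq=1, value="b"),  # resend after a lost acknowledgement
    log.append(producer_id=7, seq=3, value="d"),  # arrives before seq 2
    log.append(producer_id=7, seq=2, value="c"),
    log.append(producer_id=7, seq=3, value="d"),  # retried, now in order
]
```

Even though "b" is sent twice and "d" first arrives too early, the log ends up holding each message once and in order. In librdkafka-based clients the feature itself is switched on through the `enable.idempotence` configuration property, so none of this bookkeeping has to be written by hand.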
